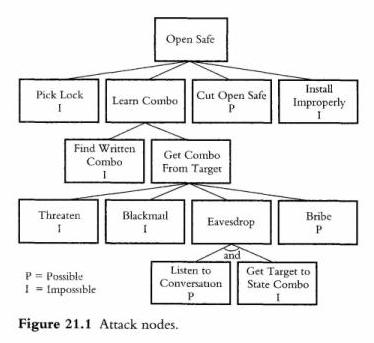

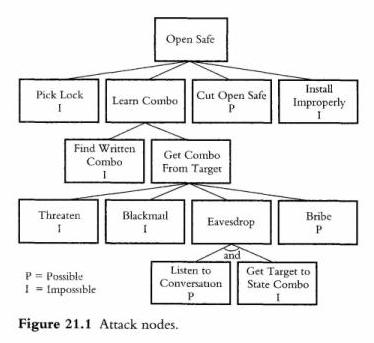

Michael Howard points to a free threat modeling tool written by Frank Swiderski, author of the forthcoming book Threat Modeling. The evolving formal discipline of threat modeling first came to my attention in 2000, when I read Bruce Schneier's Secrets and Lies. This picture, from chapter 21 of that book, is worth a thousand words:

One way to gauge the growing interest in threat modeling -- at Microsoft and elsewhere -- is to compare its coverage in the two editions of Michael Howard's Writing Secure Code. In the first edition, threat modeling is mentioned in a section of Chapter 2, Designing Secure Systems. In the second edition, it becomes a chapter in its own right.

Swiderski's tool is a GUI-based .NET app that collects tree-structured information about entry points, protected resources, and threats. If you have the Visio drawing control, you can use that to add data flow diagrams; otherwise, the tool includes a simple diagram editor. The classifications defined by the STRIDE methodology -- Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service, and Elevation of Privilege -- are available as checkboxes. Likewise, the classifications defined by the DREAD methodology -- Damage Potential, Reproducibility, Exploitability, Affected Users, and Discoverability -- are available as numeric choices (1-10). ("The concepts of STRIDE and DREAD were conceived, built upon, and evangelized at Microsoft by Loren Kohnfelder, Praerit Garg, Jason Garms, and Michael Howard." -- Writing Secure Code, 2nd Edition)
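As a rough illustration of what those checkboxes and numeric choices amount to as data, here is a minimal Python sketch. The class names (`Stride`, `Dread`, `Threat`) and the sample ratings are hypothetical and are not taken from the tool. The `average` method assumes one common convention for combining DREAD ratings; this post does not say how, or whether, the tool combines them.

```python
from dataclasses import dataclass
from enum import Flag, auto


class Stride(Flag):
    """STRIDE categories; a threat may belong to several at once (checkboxes)."""
    SPOOFING = auto()
    TAMPERING = auto()
    REPUDIATION = auto()
    INFORMATION_DISCLOSURE = auto()
    DENIAL_OF_SERVICE = auto()
    ELEVATION_OF_PRIVILEGE = auto()


@dataclass
class Dread:
    """DREAD ratings, each a numeric choice from 1 to 10."""
    damage_potential: int
    reproducibility: int
    exploitability: int
    affected_users: int
    discoverability: int

    def __post_init__(self):
        # Reject any rating outside the 1-10 range.
        for name, value in vars(self).items():
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be in 1..10, got {value}")

    def average(self) -> float:
        # Assumption: a plain average is one common way to combine the ratings.
        return sum(vars(self).values()) / 5


@dataclass
class Threat:
    name: str
    stride: Stride
    dread: Dread


# Illustrative numbers only; they are not from the report in this post.
dos = Threat(
    name="Denial of service",
    stride=Stride.DENIAL_OF_SERVICE,
    dread=Dread(6, 8, 7, 9, 5),
)
print(dos.name, dos.dread.average())
```

Modeling STRIDE as a flag set rather than a single value mirrors the checkbox behavior: `Stride.SPOOFING | Stride.TAMPERING` is a valid classification for one threat.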

I've always been suspicious of the kinds of software tools that just provide bookkeeping support for some methodology. Of course, a methodology that people can actually understand and use is really just a formalization of common sense, and I think the STRIDE/DREAD stuff falls into that category.

Here's a report on a very simple threat model, generated from the XML data captured by the tool:

Threat Model: XPath Query Service

| Section | Field | Value |
| --- | --- | --- |
| Information | Owner | Jon Udell |
| | Participants | |
| | Reviewer | |
| | Description | Jon's XPath query service |
| Threat 1 | Name | Penetration |
| | Description | |
| | Threat Tree | |
| Threat 2 | Name | Denial of service |
| | Description | |
| | Threat Tree | |

This kind of report, and the process that leads to it, is no more than a framework for thinking through the issues involved in securing an application. But it is also no less than that. If the analytic framework is easy to pick up and use, you'll do more and better analysis. To that end, I have a couple of suggestions. One's easy to implement; the other is really hard.

Here's the easy one. The built-in report viewer failed when I tried to produce my report. I took a look at the included XSLT stylesheets and found a couple of C# methods defined using the `<msxsl:script>` mechanism. They weren't doing anything particularly vital, just removing whitespace and truncating strings, so I removed references to them. That enabled me to produce the excerpt shown above using an external XSLT processor, though still not from within the tool itself, for reasons I haven't figured out. I'm sure there's an easy fix for this. But relying by default on a non-standard extension like `<msxsl:script>` isn't a great public relations move. It encourages people to think the tool is more Microsoft-centric than it in fact is. True, it requires the .NET Framework (v1.1) to run, but the generated XML is entirely neutral. For example, it writes out a data flow diagram two ways: as a Base64 encoding of a bitmapped image, and also as a chunk of SVG that could be useful in all sorts of ways on any platform. My recommendation: make the default XSLT transformations similarly neutral.
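To make the "neutral XML" point concrete, here is a small Python sketch that reads threat names, a Base64-encoded bitmap, and an SVG fragment using only the standard library. The sample document and its element names (`ThreatModel`, `Threat`, `Name`, `Diagram`, `Bitmap`) are invented for illustration; the tool's actual schema is not shown in this post and will differ.

```python
import base64
import xml.etree.ElementTree as ET

# Hypothetical stand-in for the tool's output; the real element names differ.
SAMPLE = """\
<ThreatModel name="XPath Query Service">
  <Threat><Name>Penetration</Name></Threat>
  <Threat><Name>Denial of service</Name></Threat>
  <Diagram>
    <Bitmap>Qk0=</Bitmap>
    <svg xmlns="http://www.w3.org/2000/svg" width="10" height="10">
      <rect width="10" height="10"/>
    </svg>
  </Diagram>
</ThreatModel>
"""

SVG_NS = "{http://www.w3.org/2000/svg}"

root = ET.fromstring(SAMPLE)

# Plain element lookups are enough to list the threats.
threat_names = [t.findtext("Name") for t in root.iter("Threat")]

# The bitmap is ordinary Base64 text, so any platform can decode it.
bitmap_bytes = base64.b64decode(root.findtext("Diagram/Bitmap"))

# The SVG fragment can be lifted out and saved or embedded elsewhere.
svg_element = root.find(f"Diagram/{SVG_NS}svg")
svg_text = ET.tostring(svg_element, encoding="unicode")

print(threat_names, len(bitmap_bytes), "bytes of bitmap")
```

Nothing here depends on .NET or on a vendor XSLT extension, which is the property the default stylesheets could share.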

Now here's the tough one. The real impediment to doing this kind of analysis is the classic problem of documentation that's not connected to code. It's tedious and boring to enumerate entry points (e.g., network ports, file systems) and their relationships to well-known threats. What are the odds that I'll update my threat model if I change the port on which my service is listening? Slim to none. Of course the code knows what port the service is listening on. What's more, the code can tell us a lot about potential attacks. For example, my XPath query service, written in Python, uses the BaseHTTPServer module. (I do this partly because it's so simple. There's no massive IIS or Apache edifice to worry about, just a small amount of code -- which I've read and which I understand -- that implements an HTTP responder.) Given a database of viable threats to BaseHTTPServer, an automated analysis of the code could fill in parts of the threat model for me. More broadly, automated analysis of the configuration data used by app servers, routers, firewalls, and other infrastructure software could help us automatically populate threat models. That'd be a great way to mine value from the XML that's now routinely used to describe these things. I predict that source-code analysis and configuration-file analysis will help us do more frequent and more reliable threat modeling. It'll be a challenge. But if we know that's where we're headed, we can design source-code metadata mechanisms and configuration-file formats accordingly.
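A toy version of that idea can be sketched with Python's `ast` module: scan the service's source for the call that binds the server, pull out the port, and look the component up in a threat database. Everything specific here is an assumption made for illustration: the sample source, the `find_entry_points` helper, and the contents of `KNOWN_THREATS` are all hypothetical. The sample uses `http.server.HTTPServer`, the Python 3 home of what was the `BaseHTTPServer` module in Python 2.

```python
import ast

# Hypothetical service source, standing in for the real XPath query service.
SERVICE_SOURCE = '''
from http.server import BaseHTTPRequestHandler, HTTPServer

class Handler(BaseHTTPRequestHandler):
    pass

server = HTTPServer(("", 8080), Handler)
server.serve_forever()
'''

# Hypothetical threat database keyed by class name; entries are illustrative.
KNOWN_THREATS = {
    "HTTPServer": ["Denial of service"],
}


def find_entry_points(source):
    """Return (class_name, port) for calls shaped like Name((host, port), ...)."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if not (isinstance(node, ast.Call) and node.args):
            continue
        func = node.func
        # Handle both HTTPServer(...) and module.HTTPServer(...).
        name = getattr(func, "id", None) or getattr(func, "attr", None)
        address = node.args[0]
        if (
            name in KNOWN_THREATS
            and isinstance(address, ast.Tuple)
            and len(address.elts) == 2
        ):
            port = address.elts[1]
            # Only literal integer ports are recognized in this sketch.
            if isinstance(port, ast.Constant) and isinstance(port.value, int):
                found.append((name, port.value))
    return found


entry_points = find_entry_points(SERVICE_SOURCE)
model = [
    {
        "entry_point": f"TCP port {port}",
        "component": name,
        "threats": KNOWN_THREATS[name],
    }
    for name, port in entry_points
]
print(model)
```

Because the port is read from the code rather than typed into a form, changing it in the source and rerunning the scan keeps that part of the model current. A port that comes from a variable or a configuration file would need the configuration-file analysis described above, which this sketch does not attempt.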

Former URL: http://weblog.infoworld.com/udell/2004/05/25.html#a1008