Five guys talking

Tim Bray raises some good questions about last week's Gillmor Gang episode:

> First of all, a transcript would be so much better; I don't have an hour to listen and if I did it would be in my car, and even if I tried, sitting here in my office (even though the audio is excellent) my attention is continually getting pulled away by email or instant messages or red letters in NetNewsWire or whatever. If I'm writing code or a tricky position paper or reading something material or even just thinking about a hard problem I can tune out the distractions no problem, but four guys talking? The mind wanders. [ongoing]

I agree. Doug Kaye is working on providing transcripts, but it's a hard problem and a thankless chore.

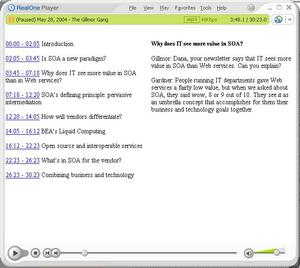

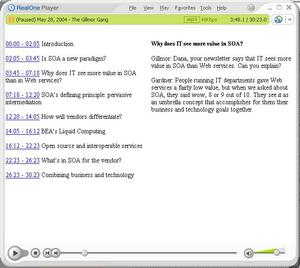

Meanwhile, I've been exploring a middle-ground approach. I went through the first half of the show, in which various aspects of service-oriented architecture were batted around, and added a layer of indexing and annotation. The result: this SMIL presentation for the Real player. Note: Clicking an index link will seek in the audio stream and synch the annotations panel, but (at least for me) won't always actually play the audio at that location unless you click again. (Annoying. Why is that?)

Here's my thinking. Even for big media organizations with big budgets, it's a struggle to get audio transcriptions done quickly and well. But maybe, with the right set of tools, it'd be feasible to create a layer of indexing plus annotation that would contextualize and give meaningful random access to the audio stream.

Working through the process gave me a clearer sense of what tools we already have, and what tools we'd need to make it practical. A huge enabler is the ability to rely on a standard Web server, rather than a specialized streaming server. Last week, I indexed and annotated a downloadable RealVideo file. The same principle applies to a downloadable MP3, and that's the core of today's experiment.

The challenge then becomes to isolate segments, form links to the beginning of each segment, and pair each audio segment with annotations displayed in another pane. I found the Winamp player really helpful for fine-tuning start/stop times. You can use CTRL-J to jump to a minutes:seconds location, and use the arrow keys to jump forward or backward in 5-second increments. It gets tedious to subtract one minutes:seconds value from another in order to arrive at durations, but there are calculators that can help with that.
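The duration arithmetic is easy to script. Here is a minimal sketch in Python, assuming segment boundaries are noted as minutes:seconds strings; the function names and the sample times are made up for illustration and don't come from the actual show index.

```python
def to_seconds(stamp: str) -> int:
    """Convert a 'minutes:seconds' string such as '3:45' to total seconds."""
    minutes, seconds = stamp.split(":")
    return int(minutes) * 60 + int(seconds)


def to_stamp(total_seconds: int) -> str:
    """Convert total seconds back to a 'minutes:seconds' string."""
    minutes, seconds = divmod(total_seconds, 60)
    return f"{minutes}:{seconds:02d}"


def segment_durations(starts, end):
    """Pair each segment start with its duration.

    `starts` lists the segment start times in ascending order;
    `end` is where the last segment stops.
    """
    marks = [to_seconds(s) for s in starts] + [to_seconds(end)]
    return [(to_stamp(a), to_stamp(b - a)) for a, b in zip(marks, marks[1:])]


if __name__ == "__main__":
    # Hypothetical start times, not taken from the real recording.
    for start, duration in segment_durations(["0:00", "3:45", "9:10"], "14:02"):
        print(f"starts at {start}, runs for {duration}")
```

Each segment's duration is just the gap to the next start time, so only the start times and the final stop time need to be written down while listening.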

These conveniences only scratch the surface, of course. We're left with plenty of roadblocks. I had no tool to help construct the index, organize the annotations into a set of panes, or orchestrate linking from the index to the annotations. More woes: The result is specific to the Real player. It won't even work in QuickTime, which has SMIL support, never mind in Windows Media Player, which doesn't. Another possible issue: the index and annotations, encapsulated in .smil and .rt (RealText) files respectively, are (I suspect) opaque to Google, which defeats the purpose of using the annotations to make the audio partly searchable. And the elephant in the room: Real's RealText isn't HTML, and the Real player isn't a browser. We can awkwardly embed AV content in a text/graphics viewer (i.e., a browser), or awkwardly embed text and graphics in an AV player, but we've never satisfactorily united the two modes.

Suppose we magically healed this longstanding breach. Suppose further that, in some hypothetical browser/player, we could even author for the combined medium -- for example, by capturing timecoded annotations in realtime, SubEthaEdit-style, or by collecting and presenting the URLs that participants visit during the event. Would the kind of hybrid presentation I'm envisioning still be a poor substitute for a complete transcript? If you had such a transcript, would the audio still be valuable, and if so, in what ways?

I can't answer these questions yet, but it's a fascinating area to explore -- and not only from the perspective of four (actually, five) guys talking on an IT radio show. Think about the meetings you attend. Think about the note-taking that does (or doesn't) occur in those meetings. Imagine being able to efficiently review what was actually said, not just what was summarized, when making decisions. In that situation, a complete transcript -- even if one could be produced cheaply and accurately -- won't tell the whole story. Recorded speech, linked to searchable annotations, would be an amazing enhancement to routine business communication.

Former URL: http://weblog.infoworld.com/udell/2004/06/01.html#a1011
